These three terms are frequently confused in reliability work, observability discussions, and customer commitments. This version separates the number you track, the standard you aim for, and the agreement you may publish externally, so teams can document each layer more clearly.

Quick List: SLI vs SLO vs SLA In Plain English

Use this section for the fast explanation before moving into the detailed definitions.

1. SLI (Service Level Indicator)

The measurable number you observe. It is the quantitative signal that tells you what level of service users are actually getting.

- 📏 What it is: A defined metric such as success rate, latency, error rate, or freshness.

- 💡 Think: What number best reflects what the user experiences?

- 🧪 Example: Percentage of successful requests out of all requests.

2. SLO (Service Level Objective)

The target you want the SLI to meet across a specified window. This is the internal standard for acceptable reliability.

- 🎯 What it is: A target value or range tied to an SLI and a time period.

- 💡 Think: How good is good enough, and over what period?

- 🧪 Example: Success rate stays at or above X% over a rolling 30-day window.

3. SLA (Service Level Agreement)

The external promise. It often uses one or more SLO-style commitments, but it becomes an SLA when outcomes are defined for misses.

- 📜 What it is: A customer-facing agreement with measurement language and remedies.

- 💡 Think: What exactly have we promised externally?

- 🧪 Example: If availability falls below X% in a month, service credits apply.

Fast rule to avoid confusion

SLI is the measurement, SLO is the target, and SLA is the promise with consequences. If the miss only causes internal follow-up, you are usually looking at an SLO rather than an SLA.

SLO vs SLA vs SLI Comparison Table

This side-by-side view makes it easier to see who usually owns each layer and what happens when performance falls short.

| Term | Meaning | Core purpose | Typical owner | If missed |
|---|---|---|---|---|
| SLI | A quantitative indicator of service level. | Show what users are actually receiving. | Engineering, SRE, platform teams | No direct consequence because it is only the metric. |
| SLO | A target for an SLI over a defined time window. | Set an internal standard for reliability. | Engineering, product, stakeholder input | Triggers internal action, prioritization, or changes in release plans. |
| SLA | A customer-facing agreement that may include SLOs and remedies. | Set and enforce an external commitment. | Product, legal, leadership, SRE input | Credits, penalties, escalations, or other remedies apply. |

Important nuance

Teams often say “SLA” when they really mean an internal response time target inside a support desk. If there is no customer remedy attached, calling it an SLO is usually more accurate and less confusing.

Break Down Each Term

Definition

An SLI is a clearly defined quantitative measure of the service level you provide. The number needs to be explicit enough that different people would calculate it the same way.

What a strong version includes

- A signal tied to user experience

- Clear scope, exclusions, and traffic definition

- Consistent measurement logic

What it is not

- Not the target by itself

- Not a contract

- Not a vague internal health metric with no user context
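The "clear scope, exclusions, and traffic definition" point can be made concrete with a short Python sketch. Everything specific here is an assumption for illustration: the `Request` fields, the `/healthz` exclusion, and the rule that only 5xx responses count as failures. The point is that each rule is written down, so two people computing the SLI get the same number.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Request:
    path: str
    status: int


# Hypothetical exclusion: synthetic health checks are not user traffic.
EXCLUDED_PATHS = frozenset({"/healthz"})


def in_scope(req: Request) -> bool:
    """Scope rule: count only requests that are not explicitly excluded."""
    return req.path not in EXCLUDED_PATHS


def is_good(req: Request) -> bool:
    """Assumed success rule: any status below HTTP 500 counts as good."""
    return req.status < 500


def success_rate(requests: List[Request]) -> Optional[float]:
    """Return good / total over in-scope requests, or None if there is no traffic."""
    scoped = [r for r in requests if in_scope(r)]
    if not scoped:
        return None
    good = sum(1 for r in scoped if is_good(r))
    return good / len(scoped)


sample = [
    Request("/orders", 200),
    Request("/orders", 503),
    Request("/healthz", 200),  # excluded from the SLI
    Request("/orders", 404),   # client error, still "good" under this assumed rule
]
print(success_rate(sample))  # 2 good out of 3 in-scope requests
```

Whether a 404 should count as good is exactly the kind of decision the SLI definition needs to state; the sketch picks one answer only to show where that decision lives.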

SLO Error Budgets Explained

An SLO by itself defines your reliability target. An error budget defines how much unreliability you are allowed.

If your SLO is 99.9% success rate over 30 days, your error budget is 0.1% over that same window.

Error Budget = 100% − SLO

If you burn through the entire error budget early in the window, you have exceeded your acceptable reliability risk. That should trigger internal action.
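As a rough illustration of the formula above, the following Python sketch turns an SLO into an allowance and reports how much of it is left. The function names and the traffic numbers are assumptions made up for the example, not values from any particular service.

```python
def error_budget_fraction(slo_percent: float) -> float:
    """Error budget = 100% - SLO, expressed as a fraction (about 0.001 for 99.9%)."""
    if not 0 < slo_percent <= 100:
        raise ValueError("slo_percent must be in (0, 100]")
    return (100.0 - slo_percent) / 100.0


def allowed_bad_requests(slo_percent: float, total_requests: int) -> float:
    """How many failed requests the budget tolerates in the window."""
    return error_budget_fraction(slo_percent) * total_requests


def budget_remaining(slo_percent: float, total_requests: int, bad_requests: int) -> float:
    """Fraction of the budget still unspent; a negative value means it is overspent."""
    allowed = allowed_bad_requests(slo_percent, total_requests)
    if allowed == 0:
        # A 100% SLO (or no traffic) leaves no budget to spend.
        return 0.0 if bad_requests == 0 else float("-inf")
    return 1.0 - bad_requests / allowed


# Illustrative numbers only: 1,000,000 requests in the window, 250 of them failed.
print(f"{allowed_bad_requests(99.9, 1_000_000):.0f} failed requests allowed")
print(f"{budget_remaining(99.9, 1_000_000, 250):.0%} of the budget left")
# For a purely time-based SLI, the same 0.1% of 30 days is about 43.2 minutes.
print(f"{error_budget_fraction(99.9) * 30 * 24 * 60:.1f} minutes")
```

A negative result from `budget_remaining` is the signal described above: the budget for the window is gone, and the team should act before the window ends.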

Error budgets are used to:

- Decide when to slow down feature releases

- Shift focus from shipping to reliability work

- Align product and engineering priorities

- Prevent over-engineering when reliability is already good enough

Without error budgets, SLOs become passive numbers. With error budgets, SLOs become decision frameworks.

This concept is central in modern reliability practices and helps teams balance speed and stability.

How to Choose the Right SLI and SLO

Choosing the wrong SLI makes every SLO and SLA built on top of it meaningless.

Start by asking: what does the user actually experience?

Good SLIs usually measure:

- Successful requests from the user’s perspective

- Latency that users actually feel

- Data freshness where delays impact decisions

- End-to-end behavior, not just internal service health

Avoid internal system metrics that do not map to user impact. CPU usage is rarely a good SLI. Request success rate often is.

When choosing an SLO:

- Make it realistic but meaningful

- Avoid chasing perfection if users cannot tell the difference

- Include a clear time window

- Make sure measurement is automated and consistent

If you cannot explain why the SLO matters to a customer or stakeholder, it is likely the wrong target.

How SLIs, SLOs, And SLAs Fit Together

The cleanest sequence is to define the signal first, then the target, and only after that decide whether anything should become a customer commitment.

Step 1: Define the SLI

Start with the measurement that best represents what users actually experience.

- 📌 Choose user-facing signals such as success, latency, or freshness.

- 📌 Write down scope and exclusions clearly.

Step 2: Set the SLO

Add a target and a time window so the measurement becomes an operating standard rather than a passive number.

- 📌 Pick a threshold and a period.

- 📌 Use it to balance reliability work and delivery speed.

Step 3: Decide if it becomes an SLA

Only move to an SLA when the measurement language is explicit and the remedies can be applied consistently.

- 📌 Define exclusions, credits, penalties, or escalation paths.

- 📌 Keep an internal buffer where needed.

The consequence test

If missing the threshold creates a defined remedy or penalty, you are in SLA territory. If it only changes internal priorities or triggers operational follow-up, it is still an SLO.
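The consequence test and the internal buffer from Step 3 can be sketched as a small data model. This is only one way to represent it: the `Commitment` class, its field names, and the 99.9 and 99.5 figures are hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Commitment:
    sli_name: str
    target_percent: float
    window: str
    remedy: Optional[str] = None  # e.g. service credits; None means internal only


def classify(c: Commitment) -> str:
    """Consequence test: a defined remedy makes it an SLA, otherwise it is an SLO."""
    return "SLA" if c.remedy else "SLO"


def has_internal_buffer(slo: Commitment, sla: Commitment) -> bool:
    """True when the internal target on the same SLI is stricter than the external promise."""
    return slo.sli_name == sla.sli_name and slo.target_percent > sla.target_percent


# Hypothetical targets: a stricter internal objective sits above the external agreement.
internal = Commitment("request_success_rate", 99.9, "30 rolling days")
external = Commitment(
    "request_success_rate", 99.5, "calendar month", remedy="service credits"
)

print(classify(internal), classify(external), has_internal_buffer(internal, external))
# prints: SLO SLA True
```

Keeping the internal target stricter than the published one means the team gets an internal signal before a customer remedy is ever triggered.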

Examples You Can Reuse

These examples show how the same service concept changes depending on whether you are describing the measurement, the objective, or the agreement.

Example 1: API request success

A good fit for web apps, APIs, and services where users mainly care that the request completes successfully.

- SLI: Success rate equals good requests divided by total requests.

- SLO: Success rate remains at or above X% over a rolling 30-day window.

- SLA: If the rate falls below X% in a calendar month, remedies apply.
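A minimal sketch of how the SLI and SLO in this example might be evaluated from daily counts. The class name and the sample numbers are assumptions. The target stays a parameter because X is not specified here, and the demo uses a 3-day window only to keep the arithmetic short.

```python
from collections import deque
from typing import Deque, Optional, Tuple


class RollingSuccessRate:
    """Tracks (good, total) request counts over the most recent N days."""

    def __init__(self, window_days: int = 30) -> None:
        # deque(maxlen=...) silently drops the oldest day once the window is full.
        self._days: Deque[Tuple[int, int]] = deque(maxlen=window_days)

    def add_day(self, good: int, total: int) -> None:
        if not 0 <= good <= total:
            raise ValueError("need 0 <= good <= total")
        self._days.append((good, total))

    def rate_percent(self) -> Optional[float]:
        """SLI: good requests divided by total requests, as a percentage."""
        good = sum(g for g, _ in self._days)
        total = sum(t for _, t in self._days)
        return None if total == 0 else 100.0 * good / total

    def meets(self, target_percent: float) -> Optional[bool]:
        """SLO check: is the rolling rate at or above the target?"""
        rate = self.rate_percent()
        return None if rate is None else rate >= target_percent


tracker = RollingSuccessRate(window_days=3)  # short window for the demo only
for good, total in [(990, 1000), (1000, 1000), (995, 1000), (900, 1000)]:
    tracker.add_day(good, total)

print(tracker.rate_percent())  # first day has rolled out: 2895 / 3000 -> 96.5
print(tracker.meets(99.0))     # 99.0 is a placeholder target, not a recommendation
```

Summing counts across the window, rather than averaging daily percentages, keeps a low-traffic day from weighing as much as a busy one.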

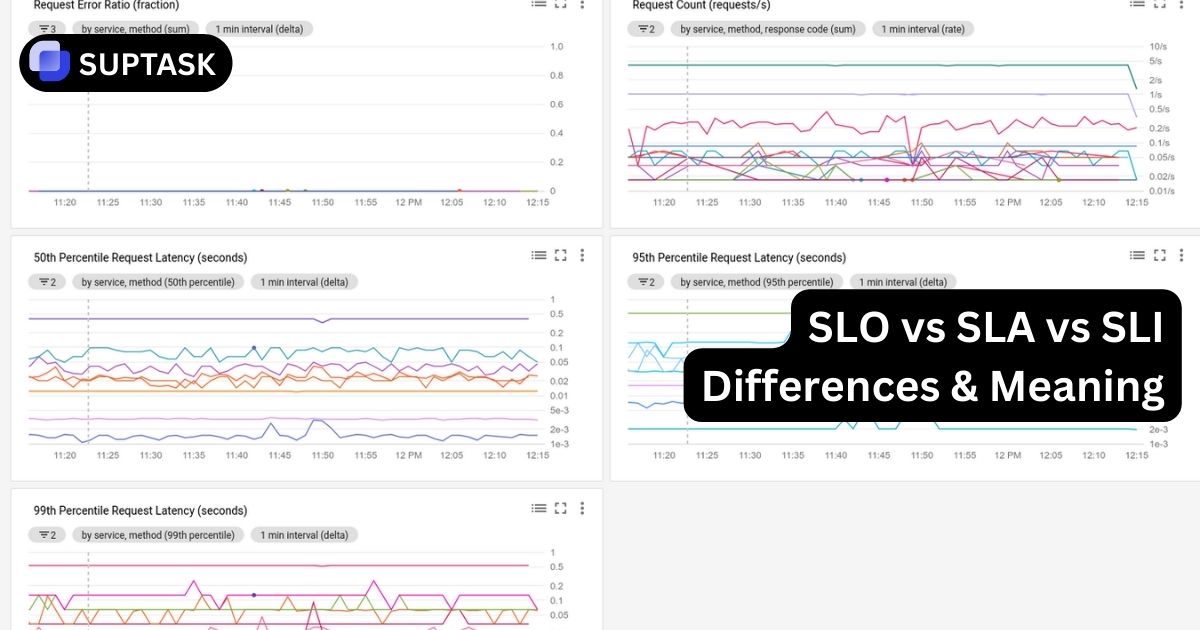

Example 2: Latency at percentile

Useful when users feel slowness before they notice outright failures, especially on critical endpoint groups.

- SLI: p95 request latency for a defined endpoint set.

- SLO: p95 latency stays below Y ms over a rolling 28-day window.

- SLA: If the published latency commitment is breached in a month, remedies may apply.
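The latency SLI above could be computed as in the following sketch. It uses the nearest-rank percentile method, which is an assumption: other methods interpolate and can give slightly different values, which is one reason the SLI definition should name the method. The threshold stays a parameter because Y is not specified here.

```python
import math
from typing import Sequence


def percentile_nearest_rank(values: Sequence[float], p: float) -> float:
    """Nearest-rank percentile: the smallest value with at least p% of samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def meets_latency_slo(latencies_ms: Sequence[float], threshold_ms: float, p: float = 95.0) -> bool:
    """SLO check: the chosen percentile must stay strictly below the threshold."""
    return percentile_nearest_rank(latencies_ms, p) < threshold_ms


# Made-up sample: 20 request latencies of 10, 20, ..., 200 ms.
latencies = list(range(10, 210, 10))

print(percentile_nearest_rank(latencies, 95))  # rank 19 of 20 -> 190
print(meets_latency_slo(latencies, threshold_ms=200))  # placeholder threshold
```

In practice latency samples usually come from histograms or a metrics backend rather than raw lists, so treat this as a statement of the calculation, not of the data pipeline.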

Common mistakes to avoid

Weak definitions cause most of the confusion here. Vague “uptime” language, targets with no time window, or agreements without precise measurement rules all make these terms harder to use safely.

Error Budgets And Choosing The Right SLI

Once the terminology is clear, the next step is turning it into an operating model that helps teams make better reliability decisions.

SLO Error Budgets Explained

An SLO defines the target. The error budget defines how much unreliability is allowed inside that same window before the team should change course.

- ⚖️ Helps teams decide when to slow releases.

- ⚖️ Aligns product and engineering on acceptable risk.

- ⚖️ Stops SLOs from becoming passive dashboard numbers.

How to choose the right SLI and SLO

The wrong SLI makes every SLO and SLA built on top of it weaker. Start with what the user actually feels, not only what is easiest to graph.

- ✅ Use signals like successful requests, felt latency, or data freshness.

- ✅ Make sure the scope and window are explicit.

- ✅ Keep the measurement automated and consistent.

- ⚠️ Avoid internal health numbers that do not clearly map to customer impact.

FAQ: SLI, SLO, And SLA Questions People Ask

These short answers work well when you need the distinction quickly without reading the whole page.

Use SLI to measure, SLO to manage, and SLA to commit

That distinction keeps engineering targets, operational standards, and customer commitments from collapsing into the same label. If you want the contractual side next, continue with Suptask’s guide to service level agreement best practices.
